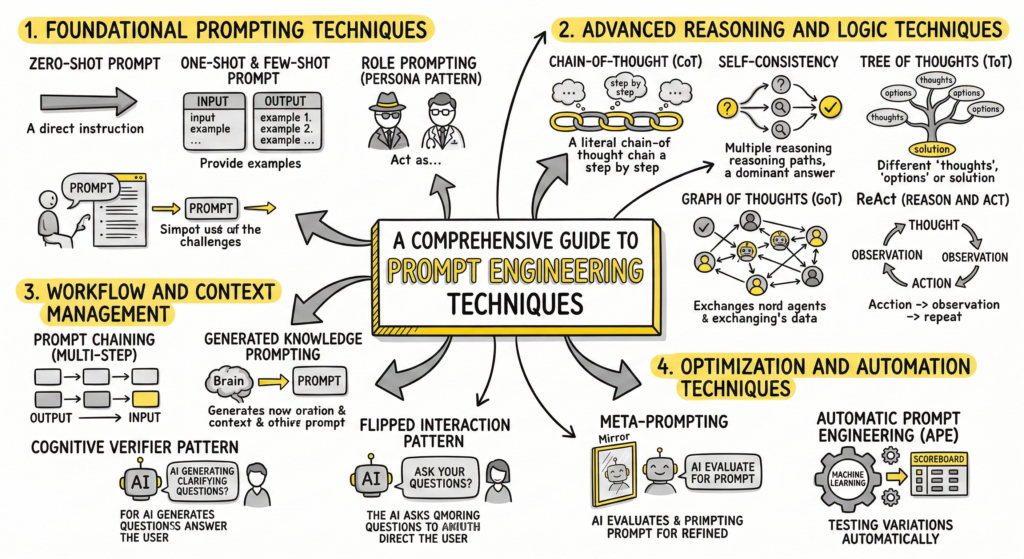

A Comprehensive Guide to Prompt Engineering Techniques

Prompt engineering is the art and science of communicating with Large Language Models (LLMs) to elicit accurate, relevant, and specific outputs. As models grow more capable, practitioners have developed a wide array of techniques to guide their behavior. Below is a guide to widely used prompting techniques, ranging from foundational basics to advanced reasoning frameworks.

Foundational Prompting Techniques

These are the core methods for instructing an AI model, typically used for straightforward tasks.

- Zero-Shot Prompting: You give the model a direct instruction without any worked examples of the task. The model relies entirely on what it learned during training to fulfill the request.

  - Best for: Simple translations, general knowledge questions, and basic summarization.

  - Limitations: Outputs can be generic or unpredictable if the instruction is ambiguous.

- One-Shot and Few-Shot Prompting: To improve accuracy and control formatting, you include one (one-shot) or a small number (few-shot) of carefully crafted input-output examples in the prompt.

  - Best for: Tasks that require a specific tone, style, or tightly structured output. The model infers the underlying “rule” from your demonstrations.

- Role Prompting (Persona Pattern): You assign a specific persona or profession to the model (e.g., “Act as a senior financial advisor” or “You are a seasoned detective”).

  - Best for: Content that should adopt the vocabulary, tone, and analytical framing of an expert in a particular domain.
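
The three foundational techniques differ mainly in what goes into the prompt. The following minimal Python sketch, assuming the common chat format of role-tagged messages (`system`, `user`, `assistant`), shows how each is assembled. `build_prompt` is a hypothetical helper that makes no model call; the resulting list would be passed to whichever client you use.

```python
from typing import Dict, List, Optional, Tuple

Message = Dict[str, str]  # {"role": ..., "content": ...}


def build_prompt(
    instruction: str,
    examples: Optional[List[Tuple[str, str]]] = None,
    persona: Optional[str] = None,
) -> List[Message]:
    """Assemble a chat-style message list (hypothetical helper).

    - no examples          -> zero-shot
    - one or more examples -> one-shot / few-shot (each pair becomes a user/assistant turn)
    - persona              -> role prompting via a system message
    """
    messages: List[Message] = []
    if persona:
        messages.append({"role": "system", "content": persona})
    for example_input, example_output in examples or []:
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    messages.append({"role": "user", "content": instruction})
    return messages


# Zero-shot: the instruction alone.
zero_shot = build_prompt("Translate to French: 'Good morning'")

# Few-shot: two demonstrations fix the output format (a bare sentiment label).
few_shot = build_prompt(
    "Review: 'The battery died after a week.'",
    examples=[
        ("Review: 'Absolutely love it!'", "positive"),
        ("Review: 'Broke on day one.'", "negative"),
    ],
)

# Role prompting: a persona in the system message.
role = build_prompt(
    "Should I pay off my mortgage early?",
    persona="You are a senior financial advisor. Answer concisely.",
)
```

Placing demonstrations as prior user/assistant turns is one common convention; embedding them as plain text inside a single prompt also works.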

Advanced Reasoning and Logic Techniques

For complex problem-solving, math, and logic, LLMs benefit from structured guidance that reduces hallucinations and reasoning or arithmetic errors.

- Chain-of-Thought (CoT) Prompting: CoT encourages the model to break a complex problem into steps and write out its reasoning before giving a final answer. The original form supplies few-shot examples that include worked reasoning; the zero-shot variant simply appends a phrase such as “Let’s think step by step” (Zero-Shot CoT). Generating these intermediate steps often improves accuracy on arithmetic, commonsense, and symbolic reasoning tasks, particularly with larger models.

- Self-Consistency: Used alongside CoT, this technique samples multiple independent reasoning paths for the same prompt (typically with a non-zero temperature) and then selects the final answer that appears most often across them, i.e. a majority vote. Aggregating in this way limits the damage from any single flawed reasoning path and typically improves accuracy over a single CoT sample.

- Tree of Thoughts (ToT): Mimicking human brainstorming, ToT has the model explore multiple reasoning branches (“thoughts”) rather than a single chain. The model evaluates how promising each partial path is, and a search procedure such as breadth-first or depth-first search expands the strongest branches, abandons weak ones, and can backtrack before converging on a solution.

- Graph of Thoughts (GoT): Going a step further than ToT, GoT models reasoning as an arbitrary graph rather than a tree. Each node is an individual thought and each edge a dependency between thoughts, so the model can merge several thoughts into one (aggregation) or revisit and improve a thought through feedback loops (refinement). This suits problems whose partial solutions must be combined, such as sorting or merging subsets of data.

- ReAct (Reasoning and Acting): This paradigm prompts the model to interleave reasoning traces with actions. The model formulates a thought, takes an action (such as running a web search or querying a database), observes the result, and repeats the cycle until it has enough evidence to answer. Grounding each step in retrieved observations helps reduce hallucinated facts.
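
Chain-of-thought and self-consistency, described above, combine naturally. This Python sketch appends a zero-shot CoT trigger, samples several completions, extracts each final answer, and takes a majority vote. `sample` is a hypothetical stand-in for a model call made with a non-zero temperature so that paths differ, and the `Answer:` line is an assumed output convention used only to make parsing reliable.

```python
import re
from collections import Counter
from typing import Callable, Optional

# Zero-shot CoT trigger plus an assumed answer convention that makes parsing easy.
COT_SUFFIX = "\nLet's think step by step, then end with a line 'Answer: <value>'."


def extract_answer(completion: str) -> Optional[str]:
    """Return the text after the last 'Answer:' marker, or None if there is none."""
    matches = re.findall(r"Answer:\s*(.+)", completion)
    return matches[-1].strip() if matches else None


def self_consistency(
    question: str,
    sample: Callable[[str], str],  # hypothetical LLM call sampled with temperature > 0
    n_paths: int = 5,
) -> Optional[str]:
    """Sample several CoT reasoning paths and return the majority-vote final answer."""
    prompt = question + COT_SUFFIX
    answers = [extract_answer(sample(prompt)) for _ in range(n_paths)]
    votes = Counter(a for a in answers if a is not None)  # ignore unparsable paths
    return votes.most_common(1)[0][0] if votes else None


if __name__ == "__main__":
    # Scripted completions stand in for a real model: two paths agree on 7, one slips to 8.
    scripted = iter([
        "3 apples plus 4 apples is 7 apples.\nAnswer: 7",
        "3 + 4 = 8.\nAnswer: 8",
        "Start with 3, add 4, get 7.\nAnswer: 7",
    ])
    print(self_consistency("Tom has 3 apples and buys 4 more. How many?",
                           lambda _: next(scripted), n_paths=3))  # prints 7
```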
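
Tree of Thoughts can be viewed as a search over partial solutions. Below is a minimal breadth-first Python sketch with a fixed beam, assuming two hypothetical callables: `propose`, which in practice would wrap a prompt such as "suggest possible next steps", and `score`, which would wrap a prompt asking the model to rate a partial solution. For brevity it omits backtracking (pruned branches are simply discarded), and the toy demo replaces the model with digit-appending and digit-sum functions.

```python
from typing import Callable, List


def tree_of_thoughts(
    root: str,
    propose: Callable[[str], List[str]],  # hypothetical: LLM suggests next thoughts for a partial solution
    score: Callable[[str], float],        # hypothetical: LLM (or heuristic) rates how promising a state is
    depth: int = 3,
    beam_width: int = 2,
) -> str:
    """Breadth-first Tree of Thoughts with a fixed beam.

    At each level, expand every kept state into candidate thoughts, score all of
    them, and keep only the `beam_width` most promising states.
    """
    frontier = [root]
    for _ in range(depth):
        candidates = [child for state in frontier for child in propose(state)]
        if not candidates:  # nothing left to expand; stop early
            break
        frontier = sorted(candidates, key=score, reverse=True)[:beam_width]
    return max(frontier, key=score)


if __name__ == "__main__":
    # Toy stand-ins: a "thought" appends a digit, and the score is the digit sum.
    best = tree_of_thoughts(
        root="",
        propose=lambda s: [s + d for d in "123"],
        score=lambda s: sum(int(c) for c in s),
    )
    print(best)  # prints 333
```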
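
The ReAct cycle reduces to a loop: ask the model for a thought and an action, run the action, append the observation, and repeat. In this Python sketch, `llm` and the entries in `tools` are hypothetical callables, and the `Action: name[argument]` / `Finish[answer]` syntax is an assumed parsing convention rather than a fixed standard.

```python
import re
from typing import Callable, Dict


def react(
    question: str,
    llm: Callable[[str], str],               # hypothetical: returns the next "Thought ... Action ..." text
    tools: Dict[str, Callable[[str], str]],  # hypothetical tools, e.g. {"search": web_search}
    max_steps: int = 5,
) -> str:
    """Interleave Thought -> Action -> Observation until the model emits Finish[...]."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        step = llm(transcript)  # model writes a thought and one action
        transcript += step + "\n"
        action = re.search(r"Action:\s*(\w+)\[(.*?)\]", step)
        if action is None:
            continue  # no parsable action; let the model try again
        name, arg = action.group(1), action.group(2)
        if name == "Finish":
            return arg
        tool = tools.get(name)
        observation = tool(arg) if tool else f"Unknown tool: {name}"
        transcript += f"Observation: {observation}\n"  # feed the result back to the model
    return "No answer within step limit"
```

Capping the loop with `max_steps` guards against a model that never emits `Finish`, and keeping the full transcript in every prompt lets each new thought build on earlier observations.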

Workflow and Context Management

When dealing with large goals, complex user intents, or massive datasets, workflow techniques help maintain AI focus and coherence.

- Prompt Chaining (Multi-Step Prompting): Instead of overwhelming the model with one large, multifaceted prompt, prompt chaining breaks the task into sequential subtasks. The output of each prompt becomes the input or context for the next, which keeps each step focused and lets humans validate the output at every stage.

- Generated Knowledge Prompting: Before asking the AI to answer a complex query, you first prompt it to generate foundational background knowledge or facts about the topic. This self-generated context is then fed back into the main prompt, allowing the model to make more accurate and informed predictions.

- Cognitive Verifier Pattern: When a user asks an ambiguous or complex question, this pattern instructs the AI to pause and generate a series of clarifying sub-questions. Once the user answers them, the AI combines those answers to produce a more accurate final response.

- Flipped Interaction Pattern: Rather than the user directing the AI, this technique instructs the AI to drive the conversation. The AI is told to ask the user questions one at a time until it gathers enough information to successfully complete a specific goal.
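
Prompt chaining amounts to feeding one prompt's output into the next template. The sketch below is a minimal Python version: `llm` is a hypothetical model call, `check` is an optional gate where a human or an automated test can reject a step's output, and the three-step `PIPELINE` is an illustrative example, not a recommended recipe.

```python
from typing import Callable, List, Optional


def run_chain(
    templates: List[str],
    initial_input: str,
    llm: Callable[[str], str],                           # hypothetical model call
    check: Optional[Callable[[int, str], bool]] = None,  # optional human/automatic gate per step
) -> str:
    """Run prompts in sequence; each template's {input} slot receives the previous output."""
    text = initial_input
    for i, template in enumerate(templates):
        text = llm(template.format(input=text))
        if check is not None and not check(i, text):
            raise ValueError(f"Step {i} output rejected: {text!r}")
    return text


# Illustrative three-step chain (hypothetical wording).
PIPELINE = [
    "Extract the key claims from this article:\n{input}",
    "For each claim below, note whether it needs a citation:\n{input}",
    "Write a three-sentence summary using these annotated claims:\n{input}",
]
```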
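
Generated knowledge prompting can be sketched as a two-call chain: one prompt elicits background facts, and a second prompt answers with those facts in context. `llm` is again a hypothetical model call and the prompt wording is an assumption. Published variants generate several knowledge statements and combine the resulting answers; this sketch keeps a single pass for clarity.

```python
from typing import Callable


def generated_knowledge_answer(question: str, llm: Callable[[str], str], n_facts: int = 3) -> str:
    """Two-stage prompting: elicit background facts first, then answer with them in context."""
    # Stage 1: ask the (hypothetical) model for relevant background knowledge.
    knowledge = llm(
        f"List {n_facts} concise, relevant facts that help answer the question below.\n"
        f"Question: {question}\nFacts:"
    )
    # Stage 2: feed the self-generated facts back in alongside the original question.
    return llm(
        "Use the facts to answer the question.\n"
        f"Facts:\n{knowledge}\nQuestion: {question}\nAnswer:"
    )
```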
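
The flipped interaction pattern turns the usual loop around: the model asks and the user answers. A minimal Python sketch follows, assuming a hypothetical `llm` callable, an `ask_user` callable (for example, the built-in `input` in a command-line session), and an illustrative trip-planning goal whose `PLAN:` prefix signals that the model has gathered enough information.

```python
from typing import Callable, List, Tuple

# Illustrative instruction; the goal and the PLAN: marker are assumptions.
FLIPPED_SYSTEM = (
    "You will plan a week-long trip for me. Ask me questions one at a time "
    "until you have enough information, then reply with 'PLAN:' followed by the plan."
)


def flipped_interaction(
    llm: Callable[[str], str],       # hypothetical: maps the conversation so far to the next model turn
    ask_user: Callable[[str], str],  # e.g. input() in a CLI; answers one question
    max_turns: int = 10,
) -> Tuple[str, List[Tuple[str, str]]]:
    """Let the model drive: it asks, the user answers, until it emits 'PLAN:'.

    Returns (plan, question/answer history); plan is "" if max_turns runs out.
    """
    history: List[Tuple[str, str]] = []
    for _ in range(max_turns):
        conversation = FLIPPED_SYSTEM + "".join(f"\nQ: {q}\nA: {a}" for q, a in history)
        turn = llm(conversation)
        if turn.startswith("PLAN:"):
            return turn[len("PLAN:"):].strip(), history
        history.append((turn, ask_user(turn)))  # one question, one answer
    return "", history
```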

Optimization and Automation Techniques

These techniques are used to refine prompts programmatically or leverage the AI to improve its own instructions.

- Meta-Prompting: This is the practice of using prompts to refine prompts. You ask the AI to act as a prompt engineer, evaluating your initial instruction for ambiguities and suggesting clearer, more effective alternatives before actually executing the task.

- Automatic Prompt Engineering (APE): APE uses a language model to generate candidate instructions for a task, scores each candidate against an evaluation metric (for example, accuracy on a set of labeled examples), and selects or iteratively refines the highest-scoring prompt with little or no manual intervention.
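
A heavily simplified Python sketch of the APE loop: sample candidate instructions from a model given a few demonstrations, score each by exact-match accuracy on a held-out set, and keep the best. `llm` is a hypothetical model call, and the proposal prompt wording and exact-match metric are assumptions; the full method can also resample variations of the top candidates, which this sketch omits.

```python
from typing import Callable, List, Tuple


def ape(
    demos: List[Tuple[str, str]],     # input/output pairs used to infer the instruction
    eval_set: List[Tuple[str, str]],  # held-out pairs used for scoring
    llm: Callable[[str], str],        # hypothetical model call
    n_candidates: int = 5,
) -> Tuple[str, float]:
    """Propose instructions from demos, score each by exact-match accuracy, return the best."""
    if not eval_set:
        raise ValueError("eval_set must not be empty")
    demo_text = "\n".join(f"Input: {x}\nOutput: {y}" for x, y in demos)
    proposal_prompt = (
        "I gave a friend an instruction. Based on these examples, "
        f"what was the instruction?\n{demo_text}\nInstruction:"
    )
    # Sample candidates, dropping duplicates while keeping first-seen order.
    candidates = list(dict.fromkeys(llm(proposal_prompt).strip() for _ in range(n_candidates)))

    def accuracy(instruction: str) -> float:
        hits = sum(llm(f"{instruction}\nInput: {x}\nOutput:").strip() == y for x, y in eval_set)
        return hits / len(eval_set)

    scored = [(c, accuracy(c)) for c in candidates]
    return max(scored, key=lambda pair: pair[1])  # ties go to the earliest candidate
```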